Instagram to Alert Parents on Teens' Searches for Self-Harm and Suicide

Parents who use Instagram's child supervision tools will soon be notified if their teenager repeatedly searches for terms related to suicide or self-harm on the platform.

This marks the first instance where Meta, Instagram's parent company, will proactively inform parents about their child's searches for harmful content, rather than solely blocking such searches and directing users to external support resources.

Parents and teens participating in Instagram's Teen Accounts experience in the UK, US, Australia, and Canada will begin receiving these alerts starting next week, with plans to extend the feature globally at a later date.

Criticism from Suicide Prevention Charity

However, the Molly Rose Foundation, a suicide prevention charity, has strongly criticized the new measures, cautioning that they "could do more harm than good."

"This clumsy announcement is fraught with risk and we are concerned that forced disclosures could do more harm than good," said Andy Burrows, the foundation's chief executive.

The Molly Rose Foundation was established by the family of Molly Russell, who died by suicide in 2017 at age 14 after viewing self-harm and suicide content on platforms including Instagram.

Burrows added: "Every parent would want to know if their child is struggling, but these flimsy notifications will leave parents panicked and ill-prepared to have the sensitive and difficult conversations that will follow."

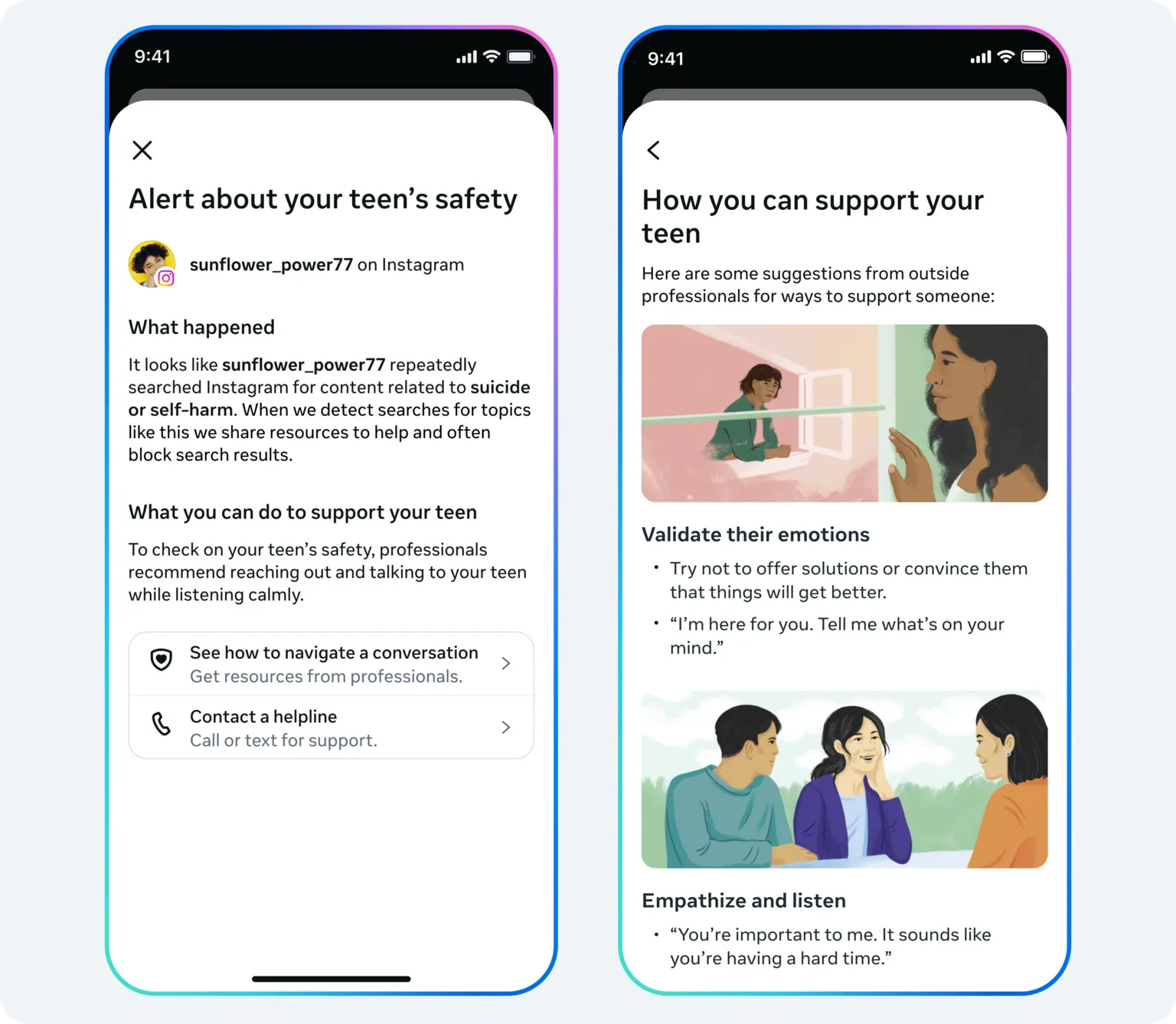

Meta says the alerts, which are sent when a teen searches for suicide and self-harm material within a short timeframe on Instagram, will be accompanied by expert resources designed to help parents handle these difficult conversations.

Nonetheless, Burrows referenced prior research conducted by the Foundation indicating that Instagram still "actively" recommends harmful content related to depression, suicide, and self-harm to "vulnerable young people."

"The onus should be on addressing these risks rather than making yet another cynically timed announcement that passes the buck to parents," he said.

Meta disputed the Foundation's findings published last September, asserting that the report "misrepresents our efforts to empower parents and protect teens."

Increased Scrutiny and New Alert Features

Instagram's Teen Account alerts are intended to notify parents if there is a sudden change in their child's behaviour and search patterns on the platform.

Meta explained in a blog post that these measures build upon Instagram's existing protections for teens, which include hiding content related to suicide or self-harm and blocking searches for harmful or dangerous material.

Alerts will be delivered to parents via email, text message, WhatsApp, or directly through the Instagram app, depending on the contact information Meta holds for each family.

Meta acknowledged that Instagram's new alerts, which are based on analysis of user search patterns, may occasionally notify parents when there is no actual cause for concern, emphasizing that the system will "err on the side of caution."

Additionally, Meta plans to extend similar alerts in the coming months to cover instances where teens discuss self-harm and suicide with AI chatbots on Instagram, recognizing that children "increasingly turn to AI for support."

Global Pressure on Social Media Safety for Children

Social media companies face mounting pressure from governments worldwide to enhance the safety of their platforms for children.

Earlier this year, Australia implemented a ban on social media use for individuals under 16 years old, with countries including Spain, France, and the UK considering comparable regulations.

Meanwhile, regulators and lawmakers are closely examining the business practices of major technology companies concerning young users.

Meta CEO Mark Zuckerberg and Instagram head Adam Mosseri recently appeared in court in the United States to defend the company against allegations that it targeted younger users.

If you have been affected by the issues discussed in this article, help and support are available via BBC Action Line.
